Exploration of fast convergence of Genetic Algorithms

Let's Evolve

Code for this experiment can be found here

Introduction

Selection, Crossover and Mutation are three important operators [2] of a Genetic Algorithm (GA). In this report we investigate the effects of Crossover, Mutation and population size in a search task. Previous studies have carried out to examine the effects of Crossover in Genetic Programming evolving neural networks[3] and found that Crossover to be an essential operator for fast convergence. Our hypothesis is that a GA with Crossover finds solutions faster to a search problem than a GA without. Here we look at Dawkin’s weasel problem, where it hypothesises that what drives the evolution is random variations (Mutation) combined with non random cumulative selec- tion(Crossover), rather than the random variation itself. The goal of the search task is to evolve a string of 28 characters that says methinks it is a weasel starting from a collection of strings with random characters

Method

We encode the genotype with printable ASCII characters (32 to 126) [5], altogether 95 possible characters forming the alphabet. Fitness F of each individual is computed to be the sum of absolute distances of each character Ci from the target character Ti. We negate this as our GA is programmed to find the maximum fitness.

$$ F = \sum_i{abs(T_i - C_i)} $$

There are other ways to compute the fitness, for example, Minimum Edit Distance[1] or a much simpler measure would be counting the number of matching characters.

For each Generation we compute the Fitness of the whole population and for statistics we record max Fitness and also the overall max Fitness. We select two individuals at random and assign Winner or Loser status depending on their Fitnesses. Selection process is slightly different from the norm - i.e. comparing half of Population selected at random to the other half and applying Crossover and Mutation to the Loser individuals. Although this only generates half the number of Population as offsprings, it is computationally e cient as Fitness is computed only once per Generation, using matrix operations. There are many ways Crossover operation is performed. Here we select One-Point Crossover where a Crossover site is randomly generated and part of Winner’s corresponding genes are copied over to Loser’s chromosome. Mutation is done by applying a small change at a randomly selected loci. The change we apply is randomly selected +1 or -1, to the ASCII encoded gene which is an integer value, within our ’alphabet’ bounds (32-126 ASCII characters).

Effect of Crossover

For the first experiment, we execute this algorithm for GenerationCount Generations or until it converges, with a range of values for Crossover probability (pCrossover), keep- ing the same Mutation probability (pMutation). For each pCrossover value we run the same experiment RepeatCount times and report the mean Generation count it takes to converge.

We used the parameters in Table 1 for the first experiment

| Setting | Value |

|---|---|

| Population Size | 3000 |

| Max Generations | 1000 |

| pMutation | 0.036 |

| pCrossover | 0.0 to 1.0 with step 0.2 |

Table 1 GA Parameters

Results

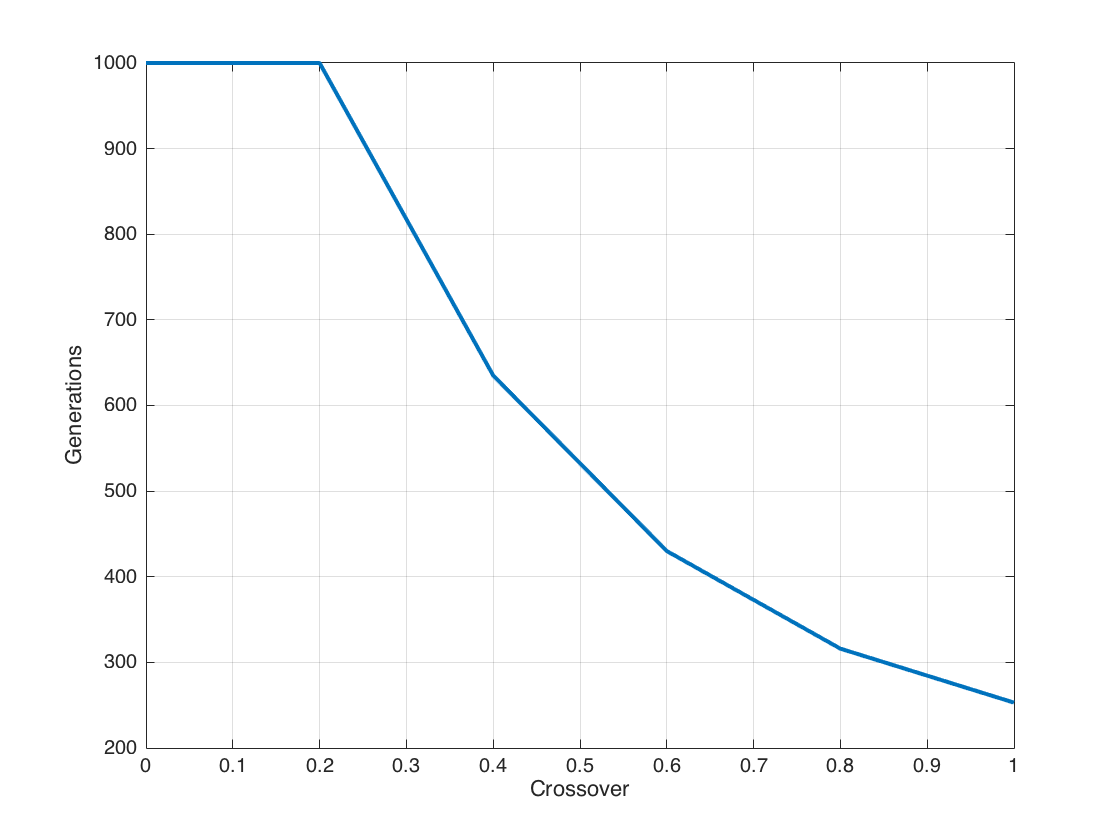

As expected the when the Crossover increased Figure 1, the average generations required for convergence decreased. At lower levels of Crossover (0.0 and 0.2) there was no convergence during the 1000 max generations we ran the algorithm.

Discussion

Figure 1 Effect of Crossover on convergence

With higher probability of Crossover, there’s better chance of winning genes to get into the next generation. This essentially speeds up the process of finding the maxima. There’s a danger in making the Population homogenous with higher pCrossover and converging into a local maxima. In a more complex fitness landscape this would be more apparent. In this case a technique like demes where the population is dispersed into overlapping groups[4] may be useful.

Effect of Population size

In this experiment we aim to explore the effect of Population size on the convergence. We ran the experiment for a range of values for Population size keeping pMutation and pCrossover steady. Parameters used are shown in Table 2

Results

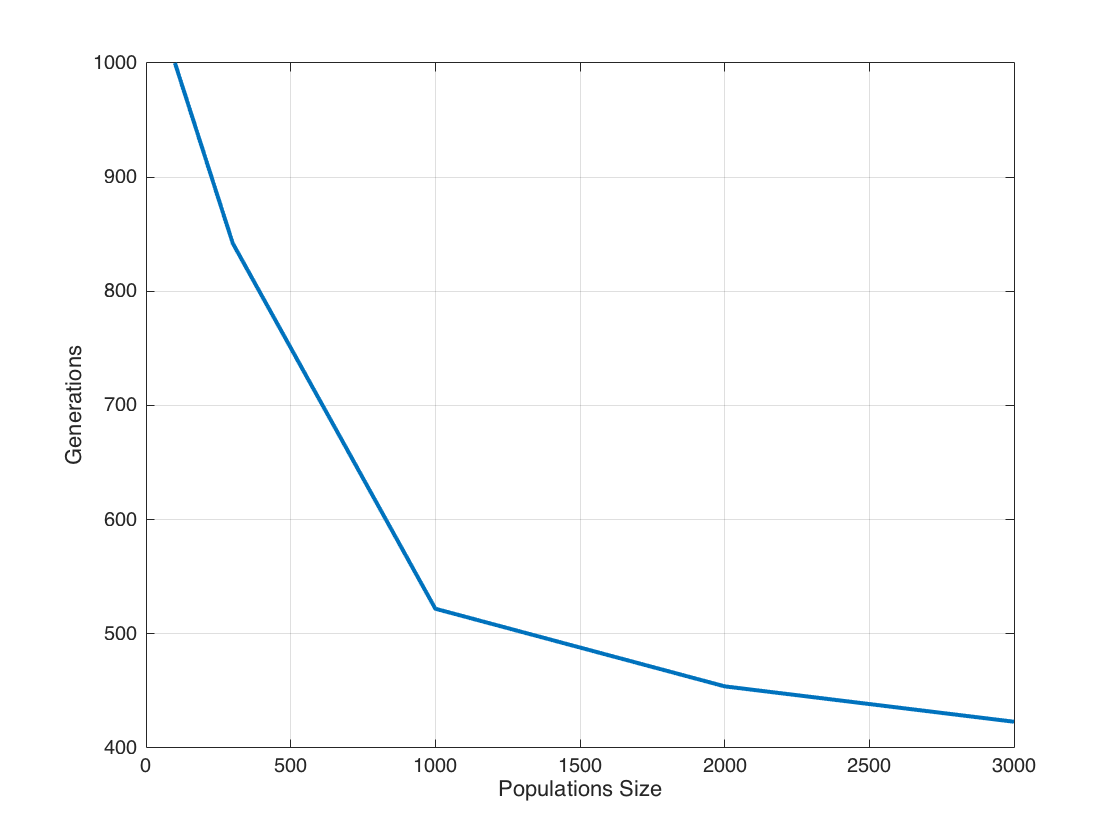

Algorithm was unable to find a convergence with a Population of 100 but with the rest of the Population sizes tested, convergence speed increased near exponentially as shown in Figure 2

Figure 2 Effect of Population size on convergence

| Setting | Value |

|---|---|

| Population Size | 100, 300, 1000, 2000, 3000 |

| Max Generations | 1000 |

| pMutation | 0.036 |

| pCrossover | 0.6 |

Table 2 GA Parameters

Discussion

With a larger Population there is a higher probability of individuals spreading across the fitness landscape. This reduces the chance of the system to get stuck in a local maxima for too long.

Dynamics of Crossover and Mutation

In this experiment we aim to explore the dynamics of pMutation and pCrossover on the con- vergence speed. We ran the experiment for a range of values for pMutation and pCrossover keeping the Population size steady. We increased the count of generations the experiment ran for in order to clarify convergence. Parameters used are shown in Table 3

| Setting | Value |

|---|---|

| Population Size | 3000 |

| Max Generations | 5000 |

| pMutation | 0.0 to 0.05 step 0.005 |

| pCrossover | 0.0 to 1.0 step 0.2 |

Table 3 GA Parameters

Results

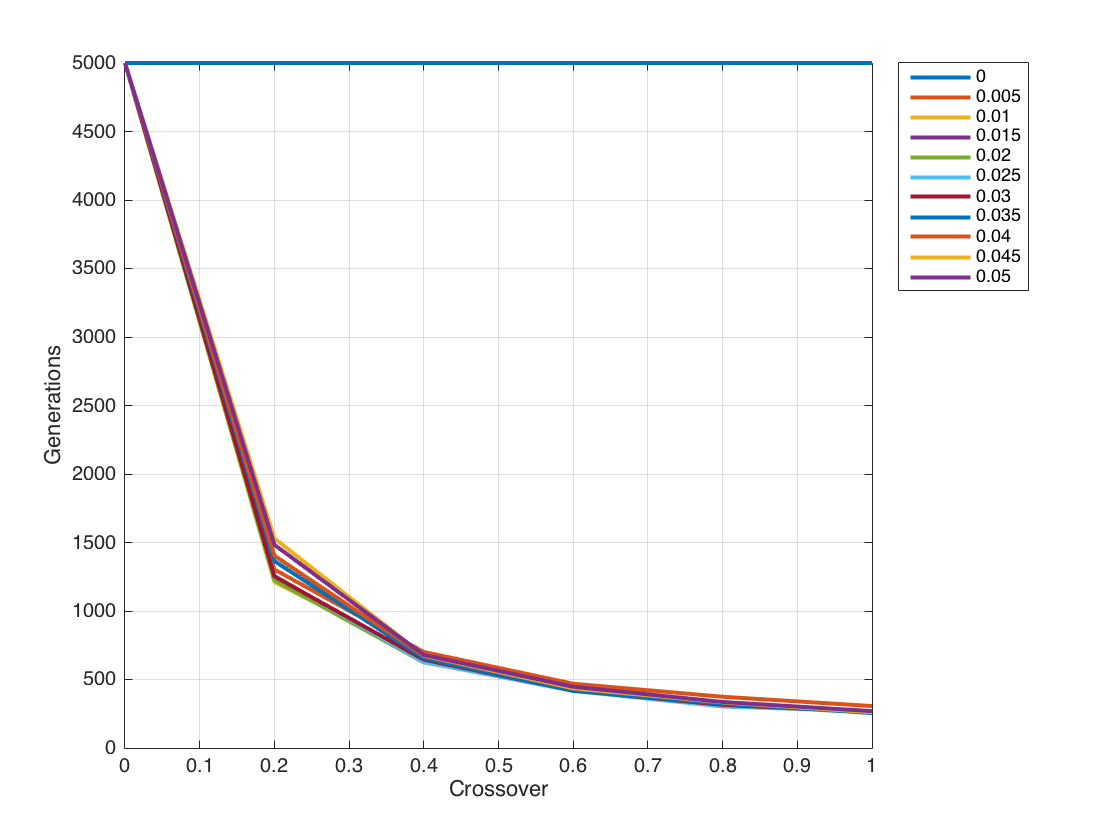

Algorithm was unable to find a convergence with pMutation 0.0 for all values of pCrossover and similarly with pCrossover 0.0 for all values of pMutation. For all other values of pMutation and pCrossover, convergence speed increased near exponentially with increased pCrossover. as shown in Figure 3

Discussion

Crossover and mutation are two essential parts of a GA. Crossover pushes the system towards the winning direction in larger steps while mutation explore the fitness landscape with finer granularity. It may be possible for mutation alone to achieve convergence but this would invariably take a very long time, far more than the 5000 generations we tested for. Similarly without mutation, Crossover may achieve convergence with a larger number of generations. This behaviour can be attributed to larger steps it takes creating oscillatory behaviour, but eventually stumbling upon the maxima.

References

[1] Lloyd Allison. Dynamic programming algorithm (dpa) for editdistance. Algorithms and Data Structures Research & Reference Material. School of Computer Science and Software Engineering, Monash University, Australia, 3168, 1999.

[2] Melanie Mitchell. An introduction to genetic algorithms. MIT press, 1998.

[3] William M Spears and Vic Anand. A study of crossover operators in genetic program- ming. Springer, 1991.

[4] Lee Spector and Jon Klein. Trivial geography in genetic programming. In Genetic programming theory and practice III, pages 109–123. Springer, 2006.

[5] Wikipedia. Ascii printable characters, 2015. [Online; accessed 22-October-2015].